Demystifying Data Wrangling: A Guide to the Six Essential Steps

Table Of Content

- What is Data Wrangling in Data Science?

- Essential Steps in Data Wrangling

- Data Wrangling Benefits

- Tools and Techniques for Data Wrangling

What is Data Wrangling in Data Science?

Data wrangling, which is also known as data munging, is a pivotal step that precedes data analysis and is crucial in data analysis. It is the process of putting down initial features and structures that enable easier analysis by cleaning, formatting and enriching the data. This includes debugging, checking for wrong values and correcting them, checking for inconsistencies or duplicate records and deleting them.

However, data is arranged most of the time by converting it to a tabular form that is easier for analyst tools to handle. The enrichment phase thus appends more information to the dataset, making it more useful and even putting it through a quality check. Data wrangling greatly contributes towards making analysts and data scientists get useful information from raw data, making the entire analytics process easy.

The importance of data wranglinghas significantly increased in 2024 due to several key factors:

- Variety and Data Volume Management: Easy to scale up to a greater workload and accommodative to a variety types of data flocking systems like social media and IoT devices.

- Supporting AI and Advanced Analytics: Delivers structured, clean, and accurate data, which is vital for embedding AI and machine learning algorithms.

- Faster Decision-Making: This reduces time spent on data preparation, hence allowing for faster analysis that can help businesses make the correct choices.

- Data and Compliance Governance: Organises and cleans data as per requirements of GDPR and CCPA to ensure data is properly handled.

- Improving Accuracy and Quality of Data: One can use this method to enrich the quality of knowledge, which contributes to refining the analytics based on the data.

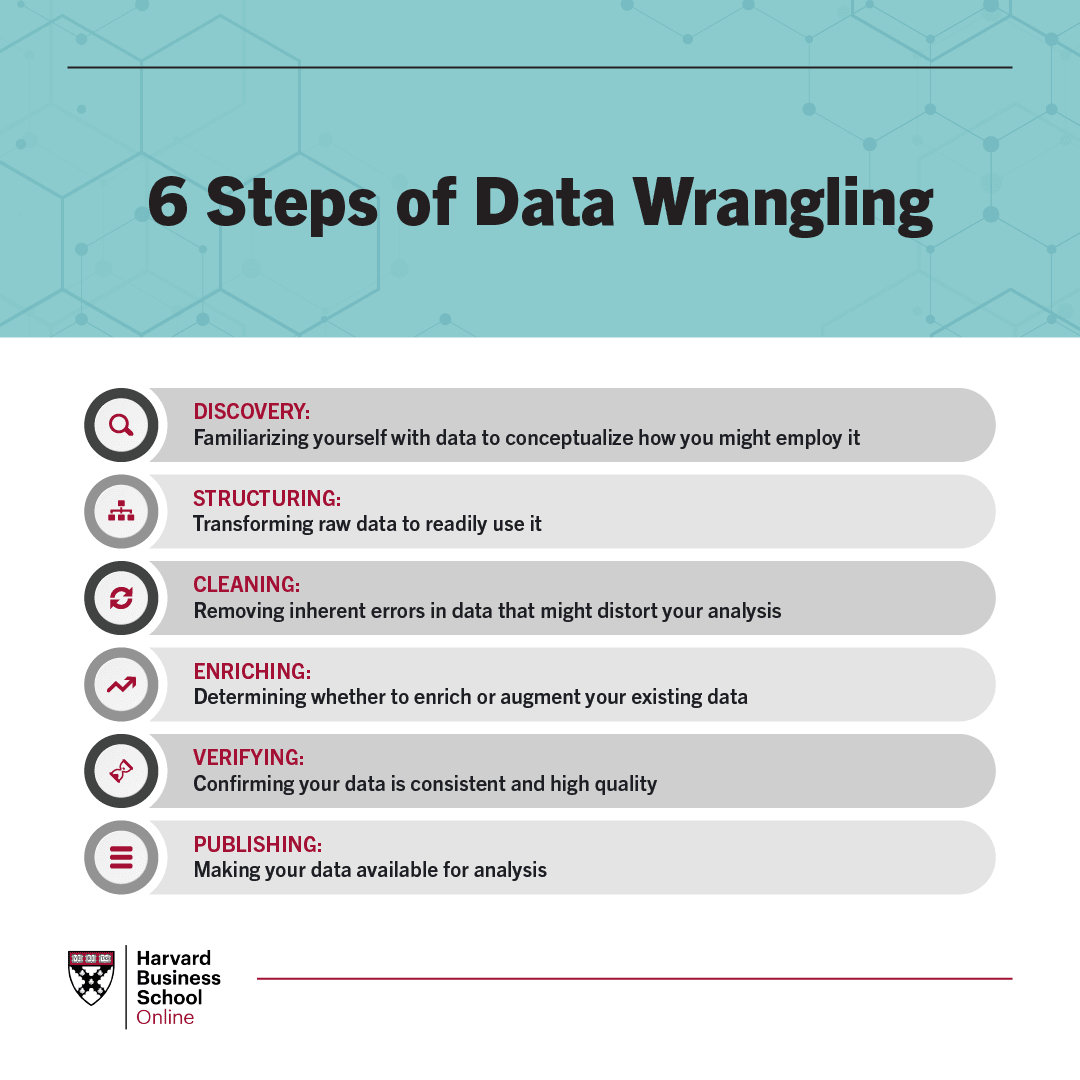

Essential Steps in Data Wrangling

Here’s a detailed breakdown of the essential data wrangling steps involved in the process:

1. Data Discovery

Data discovery is one of the phases that mainly involves the cleaning or data preprocessing step of big data. It revolves around the assessment regarding what type of data is to be collected, where and in what form it is accessible, and its plausible weaknesses. Once you understand what data you have and what goals they should help achieve, you will be able to devise a good plan for the next steps. This stage is for screening the data, trends, and regularity and looking for glitches, such as missing values or other extreme inconsistencies, which do not follow a normal distribution in the given data set. This first evaluation will dictate the subsequent steps ofdata wranglingand will guarantee that the output fits your requirements.

2. Data Structuring

Raw data is usually collected in a disrupted and unfriendly format useful for analysis. Data structuring implies the act of putting the unstructured data in an organised way that makes them suitable for use. This step involves organising raw data by categorising data points straight into a record, a table or a spreadsheet. It is also possible to add more consequent columns, categories, and headings, which contribute to enhancing usability and characterising the given data so that it is more comfortable to work with analysts.

3. Data Cleaning

Data cleaning is the process that helps detect and rectify various problems in a data set. The step involves checking the measurements for more credibility of the final dataset as well as removing bias.

During data cleaning, you may encounter tasks such as:

- Removing observations that may influence the results of the analysis.

- Convert data types in an effort to improve consistency and quality.

- Finding the values and filtering out the duplicate records of the values

- Dealing with the organisational problems and making sure that the data used is correct.

- While cleaning this data, more reliability is generated, and the data becomes more informative for the analysis.

4. Data Enrichment

Data augmentation is a process of improving the data set through a provided context or by adding new information. This process always converts the possibly cleaned and formatted data into other types or formats that can be more insightful. Data enrichment includes aggregational activities, where data gathered from different sources is accumulated into a bigger data set or the creation of new variables or features that can point out another attribute of the data set. However, data enrichment is not always mandatory; it depends on the data wrangling project in question.

5. Data Validation

Data validation simply refers to the process of finding a method that will determine if the collected data is in the appropriate format and conforms to a certain set of specifications and conditions. This step involves engagement in activities coding and the use of several cyclical programs to assess the quality and accuracy of the data. Data validation will assist in identifying any issue or problem that may remain on the data before the analysis. Sometimes, some discrepancies may arise, leading back to the data wrangling process to correct once again.

6. Data Publishing

The last step requires publishing the clean, structured and enriched data to conform to the layout meant for analysis and reporting. They may refer to its storage in a database, data warehouse, or some other data holding facility.

When you implement these fundamental processes, it is possible to gain high-quality data results as admission for data analysis for decision-making. Lastly, as data wrangling is cyclical, you may find yourself circling back to a number of steps as you interact with the data or as other issues are discovered.

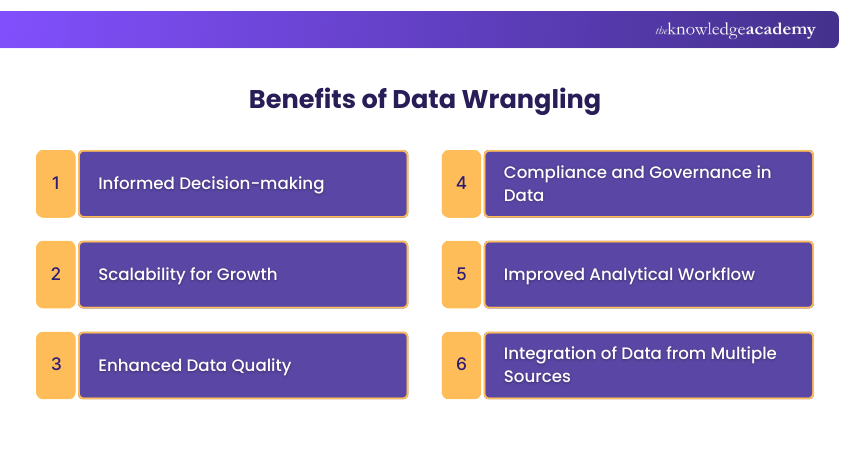

Data Wrangling Benefits

Here are the key benefits of data wranglingexplained in detail:

1. Improved Data Quality

Data wranglingimproves the quality of the data used for the analysis. Raw data is almost always contaminated by errors, irregularities, gaps, and repetitions that can bias the analysis and produce misleading information. In cleaning and validation, data wranglingembraces the following challenges: through the processes, the data used for analysis is accurate, consistent, and reliable. The accuracy of data is highly important for intangible knowledge resources and obtaining accurate outcomes.

2. Improve Analytical Efficiency

Data wrangling improves the efficiency of data preparation at the analytical stage. Meaningful use of automation tools, selection of best approaches and advanced data cleansing help data scientists and analysts spend less time in mundane data preparation. Such efficiency brings to the improvement of the overall analytical process, so analysts can study more data within the shortest time possible.

3. Use of Customised Data Processing Techniques

Machine learning models have to feed on structured and clean data to give the right answers. Data wranglingcan be applied prior to techniques like predictive modelling, customer segmentation, or trend analysis; preparing data in perfect condition to be used in these higher-level applications avoids incidences of creating false results.

4. Integration of Data from Different Sources

Modern data originates from a variety of sources across the business, including IoT, social media, and ERP systems. The wrangling of data from different sources enables integration, harmonisation of formats and the solutions to any differences to end up with the dataset. This integration is important for the analysis of the given materials and the incorporation of all the necessary information into the final view of the discussed topics.

5. Data Privacy and Compliance

As datasets are growing rapidly,data wrangling is important to guarantee that data will be saved and processed in compliance with legal and ethical constraints such as GDPR or CCPA. An organisation should always follow data governance policies as per its requirements, which reduces the chances of getting in trouble with the law due to mishandling of personal and sensitive information.

6. Empowered Decision-Making

Data wrangling is the process of preparing raw data to get to a form that can help improve decision-making. This process makes data cleaning, organisation, and enrichment possible. It assists organisations in deriving meaningful data from the primary data that is obtained. This results in better strategies, enhanced work processes, and overall increased market positioning and capability.

7. Scalability

The amount of data increases as the business grows,data wrangling tools and processes are used to meet the increasing demand. Effective use of tools improves thedata wrangling processes to support long-term growth and data-driven decision-making.

Tools and Techniques for Data Wrangling

The preparation of data is an important step before analysis and interpretation. It involves turning raw data into a form that is easier and more convenient to process. There are diverse data wrangling tools that can be used to help simplify this process. Here are some of the most popular ones:

- Excel Power Query: A basic tool in Excel for data work. It helps with easy data cleaning.

- OpenRefine: An automated tool for data cleaning. It needs coding know-how. It’s good for big data jobs.

- Tabula: A tool to pull out data from files like PDFs. Tabula works with data in neat files.

- Google DataPrep: A Google tool for looking at, cleaning, and getting data ready. It is used for online data work.

- Data Wrangler: Made by Stanford. It cleans and changes data. It turns messy data into tidy data.

- Trifacta: An online tool for managing data. It helps clean and change data. Used for big and tough data jobs.

- Pandas: A Python library for managing the data structures and operations needed for quick data manipulation, this is the tool for the data wrangling tasks typically found in programming.

- D3.js: A JavaScript library for creating interactive data visualisations in web browsers. It is used to visualise and explore cleaned data.

Master Data Wrangling with Jaro Education

To master Data wrangling, you must enrol yourself in the following program by a leading institution through Jaro Education:

Chandigarh University offers an online MCA program. It gives students the skills needed to make software, code systems, and manage databases. The program includes real-life tasks. This helps students do well in IT jobs and keep up with tech changes.

Symbiosis School offers an online M.Sc. in Computer Applications. This course is for tech students who wish to lead in tech fields. It is fully online, letting students learn at their own speed.

SSODL offers an online MS in Data Science that gives you a solid grounding in data analysis, including advanced statistical methods and machine learning techniques. This online MS in Data Science program prepares graduates for careers analysing business data.

Manipal University Jaipur’s online MCA program provides you with specialised knowledge in the realms of IT, such as cloud computing, application development, and the big data community. With a mix of theoretical expertise and practical training, this course sets you up for various positions in the IT industry.

IITRoorkee provides an excellent program in data science and AI for working professionals, which offers both practical and hands-on experience. It covers data analysis, machine learning, AI applications, and more.

Manipal University Jaipur’s online BCA degree is a great foundation for those who want to study software development and computer applications. This program focuses not only on programming and networking but also touches on database management and web development techniques.

Final Thoughts

Data wrangling is an essential part of the data analysis process. This ensures the data is clean, structured, and reliable to analyse. These six important data sources — data discovery, data structuring, data validation, and data publication — form a foundation for predictive insights, more informed decision-making, and better results. If you’re new to data science or a pro, improving your data modelling can have a major staging impact on your analytic power. Use appropriate data cleanup tools like Excel Power Query, and OpenRefine, or more advanced tools like Pandas and Trifacta to minimise costs and enjoy high-performance data preparation.

Frequently Asked Questions

- Data Discovery

- Data Structuring

- Data Cleaning

- Data Enrichment

- Data Validation

- Data Publishing

Find a Program made just for YOU

We'll help you find the right fit for your solution. Let's get you connected with the perfect solution.

Is Your Upskilling Effort worth it?

Are Your Skills Meeting Job Demands?

Experience Lifelong Learning and Connect with Like-minded Professionals